Overview

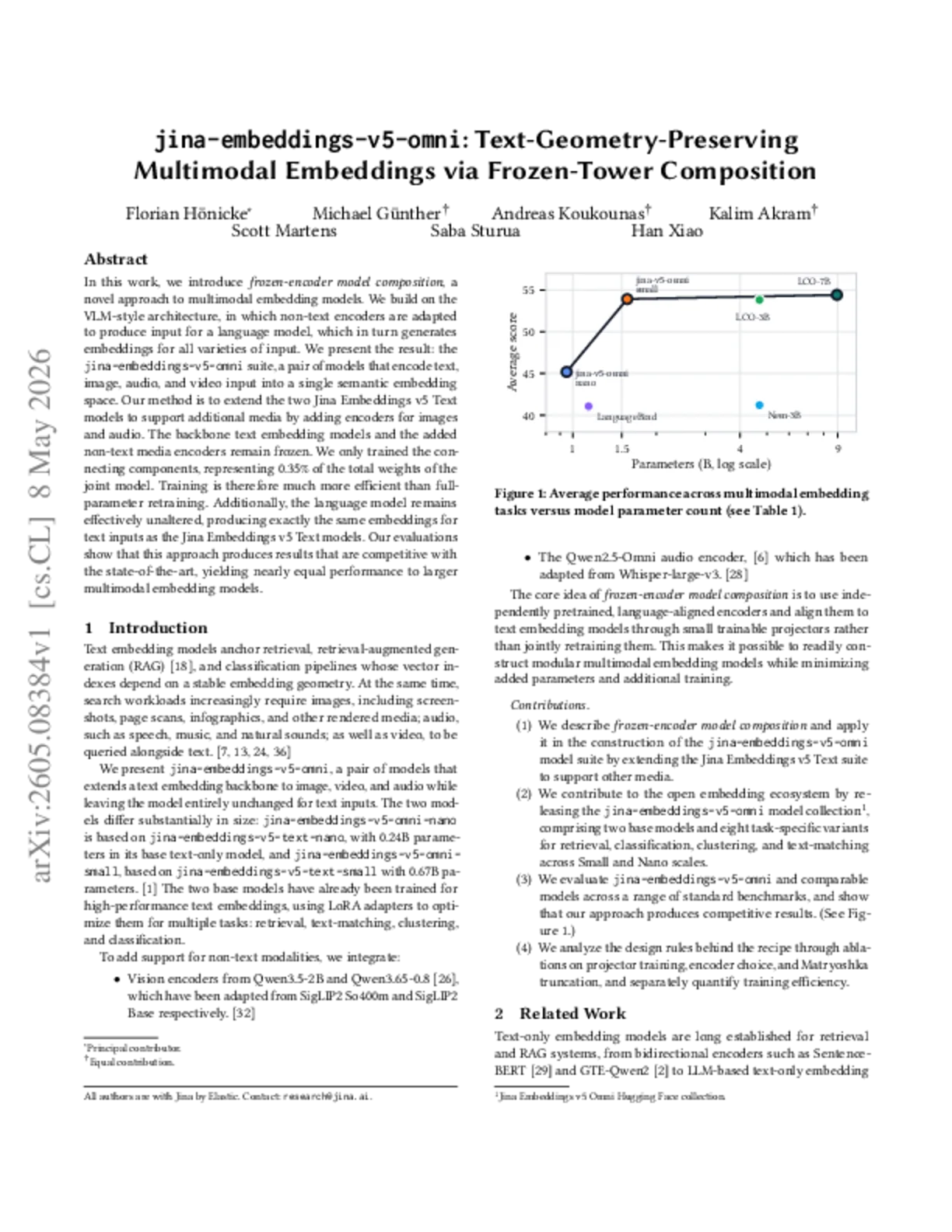

jina-embeddings-v5-omni-nano (~1.04B parameters) is the compact variant of the v5-omni family, designed for edge and commodity hardware. It extends jina-embeddings-v5-text-nano with the family's multimodal capabilities: text, image, video, and audio inputs are embedded in a shared vector space. Text-only outputs are bit-identical to those of jina-embeddings-v5-text-nano. The model produces 768-dimensional embeddings, supports Matryoshka truncation down to 32 dimensions, and has an 8K-token context length.
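Matryoshka truncation is commonly applied by keeping the leading components of an embedding and re-normalizing the result. The following is a minimal sketch of that idea in plain Python, assuming the embedding is already available as a list of floats. `truncate_embedding` is a hypothetical helper for illustration, not part of any Jina API, and the input vector is made up.

```python
import math

def truncate_embedding(vec, dim):
    """Keep the first `dim` components and L2-normalize the result."""
    if not 1 <= dim <= len(vec):
        raise ValueError("dim must be between 1 and len(vec)")
    head = vec[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        # An all-zero prefix cannot be normalized; return it unchanged.
        return list(head)
    return [x / norm for x in head]

# Stand-in for a 768-dimensional model output (values are made up).
full = [math.sin(i + 1) for i in range(768)]
small = truncate_embedding(full, 32)
print(len(small))  # 32
```

Re-normalizing after truncation keeps dot products equivalent to cosine similarity, which matters if the downstream index assumes unit-length vectors.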

Methods

Training follows the same third-stage procedure as omni-small, applied on top of jina-embeddings-v5-text-nano. The EuroBERT-210M text backbone and its LoRA adapters are kept frozen, so the text-only path is unchanged. Cross-modal projectors connect a SigLIP2 Base vision encoder and a Whisper-large-v3 audio encoder to the text backbone. Training data and objectives mirror those of omni-small.

Performance

Text-only outputs are bit-identical to those of jina-embeddings-v5-text-nano, so text-only performance is the same. Multimodal performance is slightly below omni-small, owing to the narrower embedding space (768 vs. 1024 dimensions) and the smaller text backbone, but cross-modal alignment remains strong. The model is optimized for CPU and edge hardware where the larger omni-small model cannot run.
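Because all modalities share one vector space, cross-modal retrieval reduces to comparing vectors directly. The sketch below ranks mixed-modality candidates against a text query by cosine similarity. It assumes the embeddings have already been computed; the 4-dimensional vectors, the candidate names, and the `cosine` and `rank` helpers are all hypothetical and only stand in for real model outputs.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb)

def rank(query, candidates):
    """Return candidate names sorted by descending cosine similarity."""
    scored = [(cosine(query, vec), name) for name, vec in candidates.items()]
    return [name for _, name in sorted(scored, reverse=True)]

# Toy vectors standing in for real embeddings (values are made up).
query_text = [0.9, 0.1, 0.0, 0.1]
candidates = {
    "image:cat.png": [0.8, 0.2, 0.1, 0.0],
    "audio:rain.wav": [0.0, 0.1, 0.9, 0.2],
    "video:dog.mp4": [0.5, 0.5, 0.1, 0.1],
}
ranking = rank(query_text, candidates)
print(ranking)
```

No modality-specific scoring is needed: the same similarity function applies whether a candidate came from an image, an audio clip, or a video.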

Best Practice

Usage follows the same pattern as omni-small, with identical LoRA adapter selection and multimodal input handling. The key differences are the 768-dimensional output space (with Matryoshka truncation down to 32 dimensions) and the 8K-token context window. The nano variant runs on commodity hardware without GPU acceleration. Text-only embeddings are drop-in compatible with jina-embeddings-v5-text-nano.
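When choosing an output dimension, a rough storage estimate can help. The arithmetic below compares the raw vector footprint at 768 and 32 dimensions. It assumes float32 storage (4 bytes per value) and a hypothetical corpus of one million vectors, and it ignores index overhead; `index_bytes` is an illustrative helper.

```python
BYTES_PER_FLOAT32 = 4  # assumption: vectors are stored as float32

def index_bytes(num_vectors, dim, bytes_per_value=BYTES_PER_FLOAT32):
    """Raw storage for `num_vectors` dense vectors of width `dim`."""
    return num_vectors * dim * bytes_per_value

num_vectors = 1_000_000  # hypothetical corpus size
for dim in (768, 32):
    megabytes = index_bytes(num_vectors, dim) / 1e6
    print(f"{dim} dims: {megabytes} MB")
# 768 dims: 3072.0 MB
# 32 dims: 128.0 MB
```

Under these assumptions, truncating to 32 dimensions cuts raw storage by a factor of 24, at the cost of whatever retrieval quality is lost at the smaller size.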
