Available via

I/O graph 1

I/O graph 2

I/O graph 3

I/O graph 4

Choose models to compare

Publications (1)

Overview

jina-embeddings-v5-omni-small (~1.74B parameters) is a multimodal embedding model that accepts text, images, video, and audio and produces embeddings in a shared vector space aligned with jina-embeddings-v5-text-small. You can index with text and query with any modality, or vice versa, without reindexing. The text backbone and all four task-specific LoRA adapters (retrieval, text-matching, clustering, classification) are frozen during multimodal training, so text-only outputs are bit-identical to jina-embeddings-v5-text-small. The model produces 1024-dimensional embeddings with Matryoshka truncation down to 32 dimensions and supports 32K token context length.

Methods

Trained in a third stage extending jina-embeddings-v5-text-small. The text backbone and all four task-specific LoRA adapters are frozen; only the cross-modal projectors are newly trained. A SigLIP2 So400m vision encoder handles images and video (32 uniformly sampled frames). A Whisper-large-v3 audio encoder handles audio input. PDF pages are rendered as images and processed through the vision pathway. Training uses contrastive loss with cross-modal hard negatives to align visual and audio representations with the existing text embedding space.

Performance

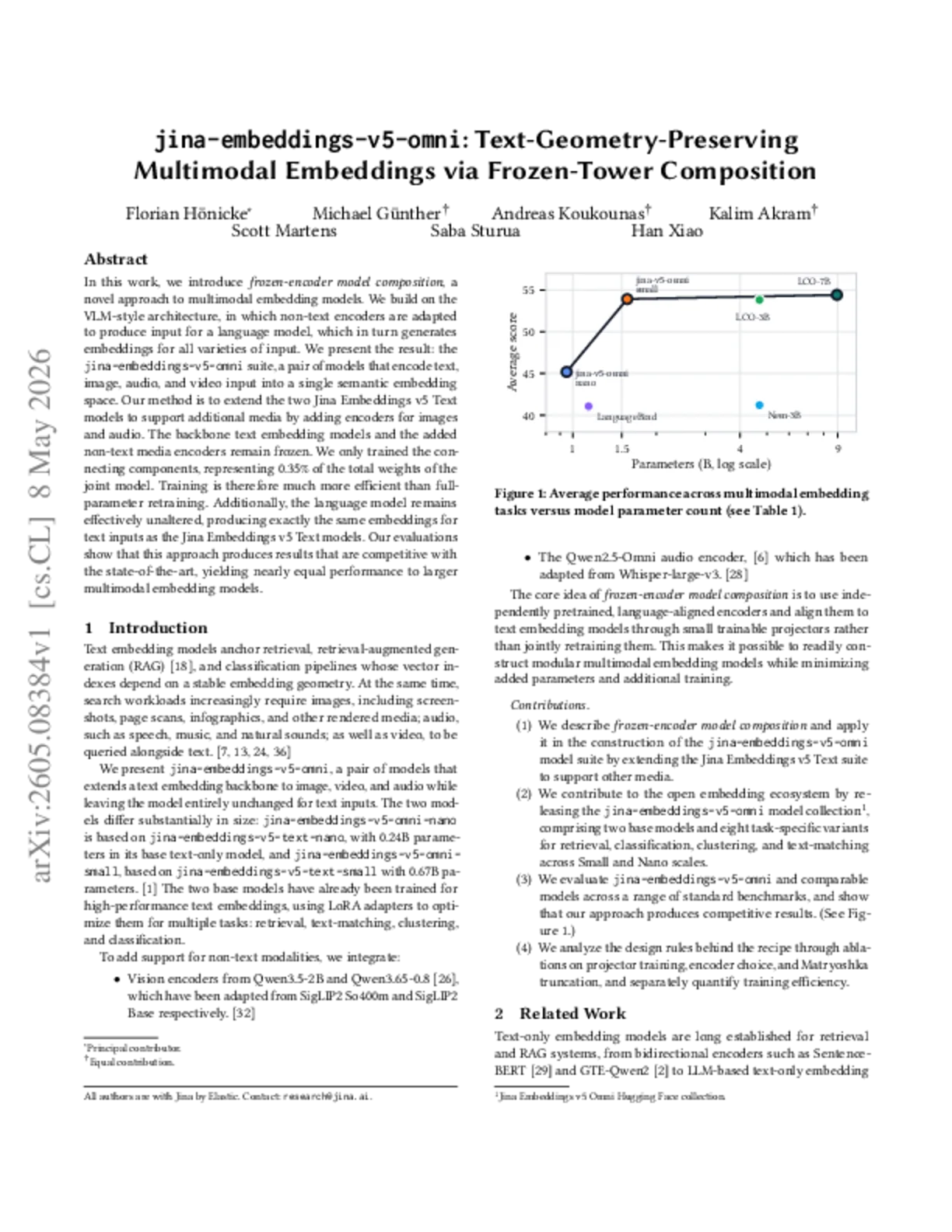

Text-only performance is bit-identical to jina-embeddings-v5-text-small — the text backbone and LoRA adapters are untouched during multimodal training. On cross-modal retrieval, the model demonstrates strong alignment across text-image, text-audio, and text-video tasks. PDF page retrieval is handled through the vision pathway. The omni-small model offers the best accuracy-efficiency tradeoff among Jina multimodal embedding models for server deployment.

Best Practice

Same four LoRA adapters as v5-text-small: retrieval, text-matching, clustering, and classification. For multimodal inputs via the API, pass image URLs, audio file URLs, video file URLs, or PDF URLs directly — the model routes each modality through the appropriate encoder. Supported audio formats include WAV, MP3, FLAC, OGG, M4A, and Opus. Video inputs are processed as 32 uniformly sampled frames. Mix modalities freely within a single batch: the embedding space is shared across all modalities. Use cosine similarity for comparison. Matryoshka truncation from 1024 to 32 dimensions is supported. Text-only embeddings are drop-in compatible with jina-embeddings-v5-text-small — no reindexing needed when upgrading.

Blogs that mention this model