In a previous post, we showed how to integrate Jina AI’s search and reader APIs with DeepSeek R1 to build a deep research agent, but it took a lot of custom code and prompt engineering to make it work. In this post we’ll do the same thing using the Model Context Protocol (MCP), which needs far less custom code and is portable across LLMs, though there are still a few pitfalls along the way.

To build our agent, we'll work with our recently released MCP server, which exposes the Jina Reader, Embeddings, and Reranker APIs as tools for URL-to-Markdown conversion, web search, image search, embeddings, and reranking.

Agents and Model Context Protocol

There’s been a lot of digital ink spilled about agents and agentic AI of late, often either hyping them up (like Gartner, which expects that by 2028 about 15 percent of daily work decisions will be made autonomously by AI agents) or tearing them down (like Vortex, which claims most agentic AI propositions lack significant value or return on investment).

But what even are agents? One of the better definitions (from Chip Huyen via Simon Willison) is:

[Agents are] LLM systems that plan an approach and then run tools in a loop until a goal is achieved

That’s the definition we’re going with for this blog post. And those tools that the agent uses? They’re connected with Model Context Protocol. That protocol, originally developed by Anthropic, is becoming the lingua franca for connecting LLMs to external tools and data sources. This means that agents can chain together multiple tools in a single workflow. The result is agents that can plan, reason, and act by orchestrating a suite of APIs.
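To make that definition concrete, here is a minimal sketch of the plan-then-loop pattern in Python. Everything in it is hypothetical: `fake_planner` stands in for an LLM, and the two tools are local stubs rather than real MCP tools. It only illustrates the control flow, not how any particular MCP client implements it.

```python
from typing import Callable

# Hypothetical stand-ins for MCP tools; real tools would call a server.
def search_stub(query: str) -> str:
    return f"https://example.com/result-for-{query.replace(' ', '-')}"

def read_stub(url: str) -> str:
    return f"contents of {url}"

TOOLS: dict[str, Callable[[str], str]] = {"search": search_stub, "read": read_stub}

def fake_planner(goal: str, history: list[tuple[str, str, str]]) -> tuple[str, str]:
    """Stand-in for the LLM: pick the next (tool, argument) or finish."""
    if not history:
        return ("search", goal)
    last_tool, _, last_result = history[-1]
    if last_tool == "search":
        return ("read", last_result)
    return ("finish", last_result)

def run_agent(goal: str, max_steps: int = 10) -> str:
    """Run tools in a loop until the planner decides the goal is achieved."""
    history: list[tuple[str, str, str]] = []
    for _ in range(max_steps):
        action, argument = fake_planner(goal, history)
        if action == "finish":
            return argument
        result = TOOLS[action](argument)
        history.append((action, argument, result))
    raise RuntimeError("goal not reached within max_steps")
```

The `max_steps` cap is a design choice in this sketch: it keeps a confused planner from looping forever and burning tokens.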

For example, we could build a price optimization agent that collects competitors’ product pricing for comparison. We could outfit the agent with Jina AI’s MCP server, a prompt, and a list of competitor products, letting it generate an actionable report with scraped data and links to sources. By using additional MCP servers, the agent could export that report to PDF format, email it to stakeholders, store it in an internal knowledge base, and more.

In this post, we’ll build three example agents with our MCP server, which provides the following tools:

- primer: Get current contextual information for localized, time-aware responses
- read_url: Extract clean, structured content from web pages as Markdown via Reader API (also available as a parallel version)
- capture_screenshot_url: Capture high-quality screenshots of web pages via Reader API
- guess_datetime_url: Analyze web pages for last update/publish datetime with confidence scores
- search_web: Search the entire web for current information and news via Reader API (also available as a parallel version)
- search_arxiv: Search academic papers and preprints on the arXiv repository via Reader API (also available as a parallel version)
- search_images: Search for images across the web (similar to Google Images) via Reader API
- expand_query: Expand and rewrite web search queries based on the query expansion model via Reader API
- sort_by_relevance: Rerank documents by relevance to a query via Reranker API
- deduplicate_strings: Get top-k semantically unique strings via Embeddings API and submodular optimization
- deduplicate_images: Get top-k semantically unique images via Embeddings API and submodular optimization
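The two deduplication tools mention submodular optimization. This post doesn't describe how the server implements it, so the following is only a rough illustration of the general idea: a greedy selection that maximizes a facility-location objective over cosine similarities. The function names and the toy vectors are our own assumptions, and real embeddings would come from the Embeddings API rather than being written by hand.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def select_diverse(embeddings, k):
    """Greedily pick up to k indices that maximize a facility-location objective."""
    n = len(embeddings)
    sims = [[cosine(embeddings[i], embeddings[j]) for j in range(n)] for i in range(n)]
    selected = []
    best_cover = [0.0] * n  # best similarity of each item to any selected item
    for _ in range(min(k, n)):
        best_gain, best_idx = -1.0, None
        for i in range(n):
            if i in selected:
                continue
            # Marginal gain: how much better item i covers every item j.
            gain = sum(max(sims[i][j] - best_cover[j], 0.0) for j in range(n))
            if gain > best_gain:
                best_gain, best_idx = gain, i
        selected.append(best_idx)
        best_cover = [max(best_cover[j], sims[best_idx][j]) for j in range(n)]
    return selected
```

With three toy vectors where the first two are near-duplicates, asking for two items returns one of the near-duplicates plus the distinct third vector, which is the behavior you'd want from a "top-k semantically unique" tool.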

We’ll also need an MCP client (VS Code with Copilot, since it's free and widely used), and an LLM (Claude Sonnet 4, since it gave the best results in our testing).

Using the Jina AI MCP Server

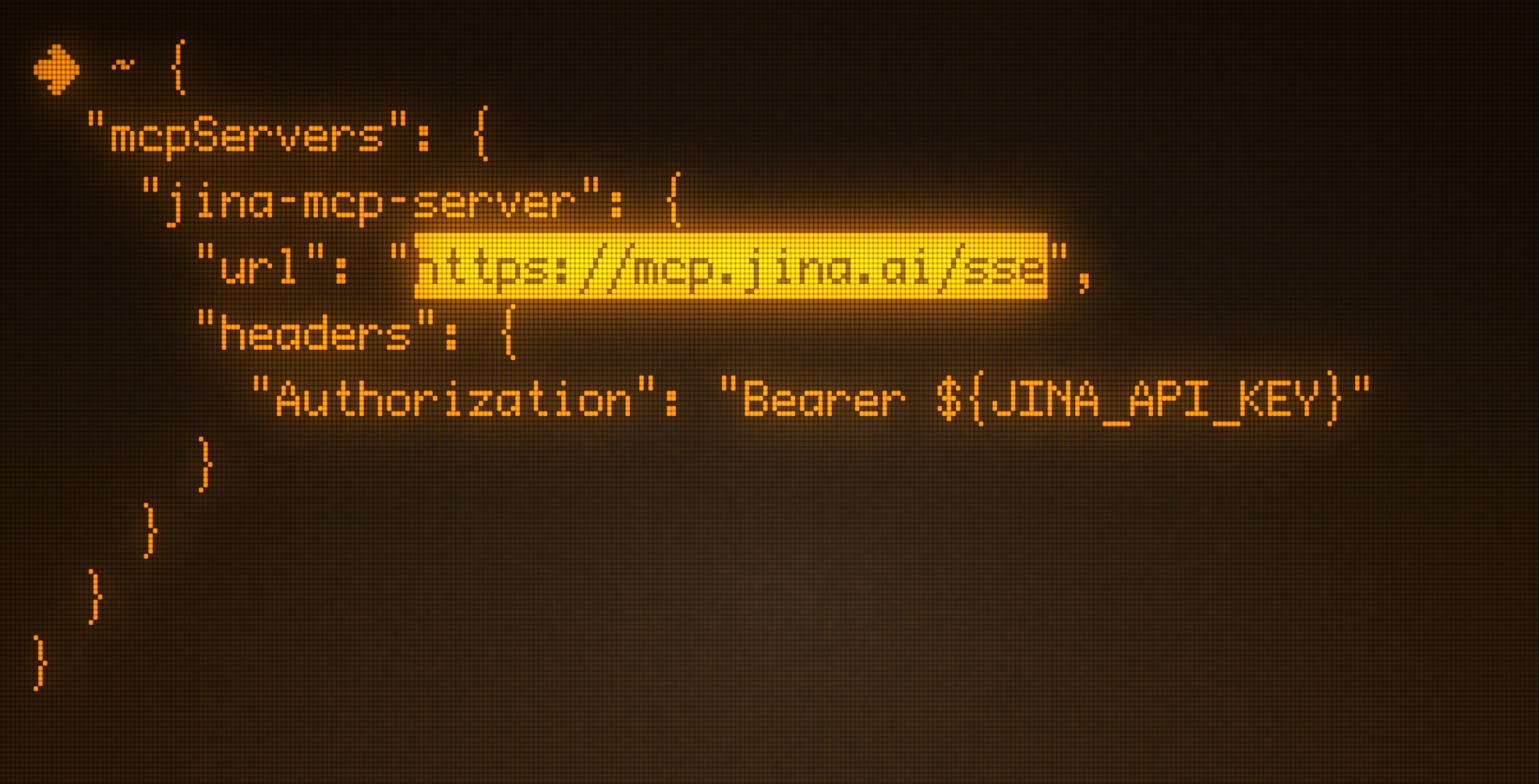

MCP has been described as a universal plugin system, supporting multiple programs like Claude Code, VS Code, Google Gemini CLI, and the desktop front-ends for Claude and ChatGPT. Connecting up an MCP server is typically just a matter of editing your settings file to include the following:

    {
      "mcpServers": {
        "jina-mcp-server": {
          "url": "https://mcp.jina.ai/sse",
          "headers": {
            "Authorization": "Bearer ${JINA_API_KEY}" // optional
          }
        }
      }
    }
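If you would rather generate that settings entry than edit it by hand, the sketch below builds the same structure in Python. It assumes your API key lives in a `JINA_API_KEY` environment variable and leaves out the `Authorization` header when no key is set, since the header is marked optional. The function name `build_mcp_config` is our own, and where the resulting JSON needs to be saved depends on your MCP client.

```python
import json
import os

def build_mcp_config(api_key=None):
    """Return the MCP settings entry for the Jina server as a plain dict."""
    server = {"url": "https://mcp.jina.ai/sse"}
    if api_key:
        # The Authorization header is optional, so only add it when a key exists.
        server["headers"] = {"Authorization": f"Bearer {api_key}"}
    return {"mcpServers": {"jina-mcp-server": server}}

if __name__ == "__main__":
    config = build_mcp_config(os.environ.get("JINA_API_KEY"))
    print(json.dumps(config, indent=2))
```

Unlike the hand-written snippet, the output is strict JSON with no inline comment, which some settings parsers require.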

To test that the MCP server works, let’s use a simple prompt:

    Give me a summary of the latest post on jina.ai news in the
    form of a single verse sea shanty

Our agent does the following:

- Call read_url to scrape https://jina.ai/news
- Call search_web with the query "site:jina.ai \"Optimizing GGUFs for Decoder-Only Embedding Models\" August 2025"
- Call read_url to scrape the blog post
- Generate a one-verse sea shanty from the scraped data:

🎵 *To the tune of a traditional sea shanty* 🎵

**"The GGUF Sailor's Tale"**

*Oh, they sailed with their embeddings, three billion strong and true,*

*Through quantized waters deep and blue,*

*With L4 GPU winds at their back,*

*Four thousand tokens per second on their track!*

*Heave away, me hearties, strip the vision tower down,*

*For GGUF optimizations are the finest ports in town!*

Now that we’ve seen that it works in practice, let’s really put it through its paces by building some useful real-world examples.

Example 1: Daily arXiv Paper Summaries

Reading the latest academic papers is part of our jobs at Jina AI. But filtering out the truly relevant ones and extracting the most important information from each can be a real chore. So, for our first experiment, we automated that task by creating a daily digest of the most relevant recent papers. Here’s the prompt we used:

    Using only Jina tools, scrape arxiv for the papers about
    LLMs, reranking, and embeddings published in the past 24
    hours, then deduplicate and rerank for relevance, outputting
    the top 10. For each one, scrape the PDF and extract the
    abstract. Then summarize it and organize the information you
    gathered into a "daily update". Include a link and publication
    date for each paper.

Our agent:

- Searches for relevant arxiv.org papers (using the parallel_search_arxiv tool) with the query strings "large language models LLM", "reranking information retrieval", "embeddings vector representations", "transformer neural networks" and "natural language processing NLP"
- Removes duplicates (using the deduplicate_strings tool)
- Reranks the results (using the sort_by_relevance tool), outputting only the ten most relevant results
- Retrieves the URLs to the PDFs for the reranked results (using parallel_read_url), split into two batches of five
- Reads each URL (using the read_url tool, called ten times)
- Generates a detailed report, including abstracts, summaries, trends and insights, implications for future research, research gaps, and conclusions

We occasionally ran into the problem that the agent didn’t limit its results to the past 24 hours. Prompting it one more time to follow that instruction resulted in the report above.
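One way to guard against this without re-prompting is to filter the results by date after the agent returns them. The sketch below is a hypothetical post-processing step, not part of the MCP server. It assumes each paper is a dict with an ISO 8601 `published` timestamp, which is our own illustrative format rather than the actual output of the search_arxiv tool.

```python
from datetime import datetime, timedelta, timezone

def published_within(papers, hours=24, now=None):
    """Keep only papers whose 'published' timestamp falls in the last `hours`."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(hours=hours)
    recent = []
    for paper in papers:
        published = datetime.fromisoformat(paper["published"])
        if published.tzinfo is None:
            # Assume UTC when the timestamp carries no timezone.
            published = published.replace(tzinfo=timezone.utc)
        if cutoff <= published <= now:
            recent.append(paper)
    return recent
```

Passing `now` explicitly makes the filter reproducible, which helps when you compare digests generated on different days.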

Example 2: Market Research Agent

For our next experiment, we'll have our agent write a competitive intelligence report about a notable video game company (name redacted). Here's our prompt:

    Create a comprehensive competitive intelligence report for
    $GAME_COMPANY focusing on their recent activities in retro
    indie games. Use Jina tools to search for the latest news,
    press releases, and announcements, then extract clean content
    from their official communications. Rank all findings by
    business relevance and remove any duplicate information.
    Present insights on their strategic direction, product
    launches, and market positioning changes over the past
    quarter

Our agent:

- Runs several loops of search_web and read_url to gather research
- Reranks its findings using sort_by_relevance, outputting the top ten results
- Generates a market intelligence report, including executive summary, critical business developments (ranked by strategic importance), strategic decision analysis, and many other sections

Example 3: Legal Compliance Research

As we said earlier, one of the useful aspects of MCP is that we can use multiple servers to get more complex outputs. In this case, we’re using the PDF Reader MCP Server in addition to our own to create a research report on the current state of AI legal compliance in the EU and the USA. We used the prompt:

    Develop a knowledge base section focused on AI legal
    compliance news and common pitfalls in the EU and USA as of
    this moment. Report should be aimed at AI startups in EU.
    Apply Jina MCP tools extensively: perform parallel web
    searches and URL reads to efficiently extract detailed
    content, deduplicate semantic overlaps, and rerank to surface
    the most authoritative information. Cite all sources with
    URLs and publication or update dates. Organize content
    clearly and produce a formatted PDF document ready for
    immediate use.

Our agent:

- Performs a parallel search operation for general information (using parallel_search) with the queries "EU AI Act 2024 compliance requirements startups legal obligations August 2025", "USA AI regulation Biden executive order compliance requirements 2024 2025", "AI startup legal pitfalls Europe GDPR data protection compliance 2025", "AI liability insurance compliance requirements EU USA startups 2024 2025" and "AI ethics governance framework startups EU USA regulatory updates 2025", returning 25 results for each query
- Deduplicates the returned URLs (using deduplicate_strings)
- Reads the contents of four URLs (using parallel_read_url)
- Performs a further parallel_search for more specific information, with the queries "AI startup common compliance pitfalls mistakes EU USA 2025", "AI liability insurance cybersecurity startup requirements 2025", "AI bias discrimination testing requirements EU AI Act compliance startups" and "AI data protection GDPR violations penalties startups 2025"
- Uses parallel_read_url to read through a further four URLs
- Generates a report in Markdown format and converts it to an 18-page PDF

We also had to do a little further prompting to improve PDF metadata and formatting, as well as to make it more of a report instead of a very long bulleted list, but that’s something we would integrate into the prompt for future reports.

Alternative Approaches

Before going with Claude Sonnet 4, we tried a range of Ollama models that supported tools, including Qwen3:30b, Qwen2.5:7b, and llama3.3:70b. For the MCP client, we initially used ollmcp before jumping to VS Code. All of the above models failed in the same way, no matter how explicitly we prompted them with the tools and how to use them. When asked to perform even a simple task, like retrieving the latest blog post from Jina AI, each model (no matter the size or vendor) would consistently:

- Go into a lengthy reasoning loop, constantly second-guessing itself (and using up tokens) until it finally resolved to just do as it was told
- Call read_url for https://jina.ai/news
- Inspect the blog post titles and excerpts
- Completely hallucinate that it scraped the latest post (without even calling read_url for that page)
- Present a summary based on the excerpt from the top search result (not the actual page content)
- When questioned, claim it followed the instructions exactly and scraped the page as requested
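Because these models claimed to have read a page they never fetched, it can help to check the tool-call trace rather than trust the model's own account. The sketch below assumes a hypothetical trace format, a list of dicts with `tool` and `arguments` keys. Real MCP clients log tool calls in their own formats, so treat the field names as placeholders.

```python
def was_url_read(trace, url, tool_name="read_url"):
    """Return True if the trace contains a read call for exactly this URL."""
    return any(
        call.get("tool") == tool_name
        and call.get("arguments", {}).get("url") == url
        for call in trace
    )

def unverified_urls(trace, cited_urls):
    """List the cited URLs that the agent never actually fetched."""
    return [url for url in cited_urls if not was_url_read(trace, url)]
```

This version only checks single read_url calls. Covering the parallel variant would mean also inspecting whatever argument that tool uses for its list of URLs, which we have not modeled here.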

Models in the Claude, GPT and Gemini families provided acceptable output, though we pretty quickly landed on Claude Sonnet 4 as it made extensive use of tools (often reaching for parallel tooling options rather than the serial approach favored by GPT-4.1) and generated longer, better-structured output.

Conclusion

There's still a lot of fuzziness around the term "agentic AI", but MCP represents a step towards making it something solid and practical. In our experience, agents aren't quite ready for prime time just yet, with the LLM generally being the weak link, but with a little hand-holding and experimentation, it's possible to get good results. When you do get the right combination of prompt, LLM, and MCP servers, you can see agents reliably execute multi-step tasks with no custom code needed. That was much harder with previous models like DeepSeek (which don't support tools): they required more manual engineering and resulted in brittle integrations.

Despite these current limitations, the trajectory is promising. The MCP ecosystem is growing rapidly, bringing more integrations and tools that make it easier to mix and match APIs, such as Jina's, or to swap in new LLMs as they become available. As both the underlying models improve and the tooling ecosystem matures, the gap between experimental agents and production-ready agentic AI continues to narrow, making robust implementations increasingly accessible for real-world applications.